Testing openBIM data exchange for client specific data sets

Introduction

As many of you who read this blog regularly know, Bond Bryan Digital promote open standards wherever possible to exchange information between different project stakeholders. The primary methods of exchange we utilise on our projects are PDF, XLSX, IFC, COBie and BCF (and DWG if we really have to!).

Many are still sceptical about information exchange using certain formats. This is particularly true of IFC (Industry Foundation Classes), which is described by ISO16739:2013. The truth though is that the IFC schema is not really to blame. Exchange is often poor because of either poor implementation of the schema by vendors or poor implementation by users.

Over the last few years the Bond Bryan Digital team have spent countless hours understanding information exchange into and out of specific tools. We use this collective knowledge to feed back the issues we find to vendors and to help users implement the requirements they are required to deliver. To understand what does and doesn’t work, though, it is necessary to fully test information exchange to ensure that data is not lost and is reliable between software solutions.

So how do we do this? Well, it’s pretty simple really. We can produce robust test files that are built to align with the employer’s information requirements. This is possible where data requirements have been fully described, and is easiest when they have been described around industry standards such as IFC and COBie.

Why create test files?

Creating test files allows us to test exchange, particularly of data, between various solutions well in advance of the need to hand over the final information for the In Use (RIBA Stage 7) phase of a project. The data we want to exchange needs to be consistent from all tools. We want everyone to use the same approach irrespective of their chosen authoring tool(s). The methodologies for creating open standard data vary between authoring tools but the outputs are the same (or at least should be!).

The IFC and COBie test files can be used for many things but currently these are some of the processes we have focussed on:

- Allow testing into model federation/checking software (e.g. Solibri Model Checker or Autodesk Navisworks) to ensure reliable exchange

- Allow us to ensure the model checking rules we create are robust for the project (i.e. the test files should be able to pass all the required automated rules)

- Allow for testing and configuration into quantification software (e.g. Exactal CostX, Causeway BIMmeasure, Nomitech CostOS etc) to ensure reliable exchange

- Allow for testing and configuration into data management solutions that will make use of the IFC(s) (e.g. gliderBIM or Clearbox BIMXtra) to ensure reliable exchange

- Allow for testing and configuration into Computer Aided Facilities Management (CAFM) solutions to ensure reliable exchange, often years in advance of final handover of actual project information

- Allow testing into other software solutions required to exchange information

Most importantly though, these files allow us to ensure the data exchange is robust and that the right data is being exchanged. The test files allow clients, contractors or others to ensure the files meet their own needs. If there are any deficiencies, the requirements can be adjusted accordingly and the test files updated to match.

The advantage of using test files over the actual project files is that we are dealing with a cleaner and more robust data set, which allows us to see any issues far earlier in the project than if we wait for actual project files. The files allow us to ensure that the requirements can be delivered and mean we can help model authors to meet the requirements during the project. They also give authors something to see what they are aiming for when creating their own files, and aid communication when issues do arise.

What do the files test?

The test files here are primarily focussed on data. These particular files are not interested in geometry exchange; geometry exchange tests would need a different set of test files. The test files we have developed to date test the following:

- Compliance with the IFC schema:
  - Element classification (e.g. Door, Wall, Window etc.)
  - Predefined Types
  - Attributes (e.g. Name, Description etc.)
  - Property Sets and Properties
  - Base Quantities *
  - Classification references (e.g. Uniclass 2015)
  - Layers (e.g. in accordance with BS1192:2007+A2:2016 utilising Uniclass 2015 classification)

- Compliance with the COBie schema:
  - Contact
  - Facility
  - Floor
  - Space
  - Zone
  - Type
  - Component
  - System
  - Attribute
  - Coordinate

- Compliance with other industry standards such as BS8541-1:2012

- Compliance with client specific requirements:
  - Additional Property Sets and Properties outside of the IFC or COBie schema
  - Specific Zone requirements
  - Nomenclature requirements

- Compliance with other specific requirements:
  - Additional quantities (i.e. in addition to those provided from Base Quantities)

* Testing of quantities is affected by how geometry has been created in the native authoring tools so the testing of Base Quantities is very limited in these data test files.
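
To make the list above more concrete, here is a minimal sketch of how one such data check could be expressed in code. It is not how our checking tools work internally: the `Requirement` class, the `check_element` function and the dictionary layout of an element are all hypothetical, and the classification code is a placeholder. `IfcSlab`, `FLOOR` and `Pset_SlabCommon` are used only as a familiar IFC example.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """Hypothetical description of the data one element type must carry."""
    ifc_class: str
    predefined_type: str
    attributes: tuple = ("Name", "Description")
    psets: dict = field(default_factory=dict)  # property set -> required property names
    classification: str = "Uniclass 2015"


def check_element(element, req):
    """Return a list of issues for one element record; empty means compliant."""
    issues = []
    if element.get("ifc_class") != req.ifc_class:
        issues.append(f"expected class {req.ifc_class}")
    if element.get("predefined_type") != req.predefined_type:
        issues.append(f"expected predefined type {req.predefined_type}")
    for name in req.attributes:
        if not element.get("attributes", {}).get(name):
            issues.append(f"missing attribute {name}")
    for pset, names in req.psets.items():
        present = element.get("psets", {}).get(pset, {})
        for name in names:
            if name not in present:
                issues.append(f"missing property {pset}.{name}")
    if req.classification not in element.get("classifications", {}):
        issues.append(f"missing {req.classification} reference")
    return issues


slab_requirement = Requirement(
    ifc_class="IfcSlab",
    predefined_type="FLOOR",
    psets={"Pset_SlabCommon": ["IsExternal", "LoadBearing"]},
)

# One test element, written out by hand for illustration.
test_slab = {
    "ifc_class": "IfcSlab",
    "predefined_type": "FLOOR",
    "attributes": {"Name": "Slab test element", "Description": "Floor slab"},
    "psets": {"Pset_SlabCommon": {"IsExternal": False, "LoadBearing": True}},
    "classifications": {"Uniclass 2015": "placeholder-code"},
}

print(check_element(test_slab, slab_requirement))  # prints []
```

A test file is, in effect, one such compliant element for every required element type and sub type, so an empty issue list for every element is the target.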

About the test files

When we create files built around standards, we want to deliver them in full compliance with these standards. This means that when we say we utilise IFC and COBie, we want them to fully align (wherever possible) with these schemas. Too often people state that they are using IFC and say they support open standards. Most people however use IFC for geometry exchange, and if you open most IFCs they are full of junk data and non-compliant with even the most basic industry standards.

Image: IFCs shouldn’t resemble your kitchen drawers! They should be ordered in line with the defined schema meaning you can always find what you are looking for.

So our goal at Bond Bryan Digital is to help others deliver the most robust IFC and COBie that can be delivered with the tools that are utilised on projects. We want to be able to open these files in the future and know that they are optimised, robust, reliable and reusable.

The testing process

Our test process is built around 10 steps. These are as follows:

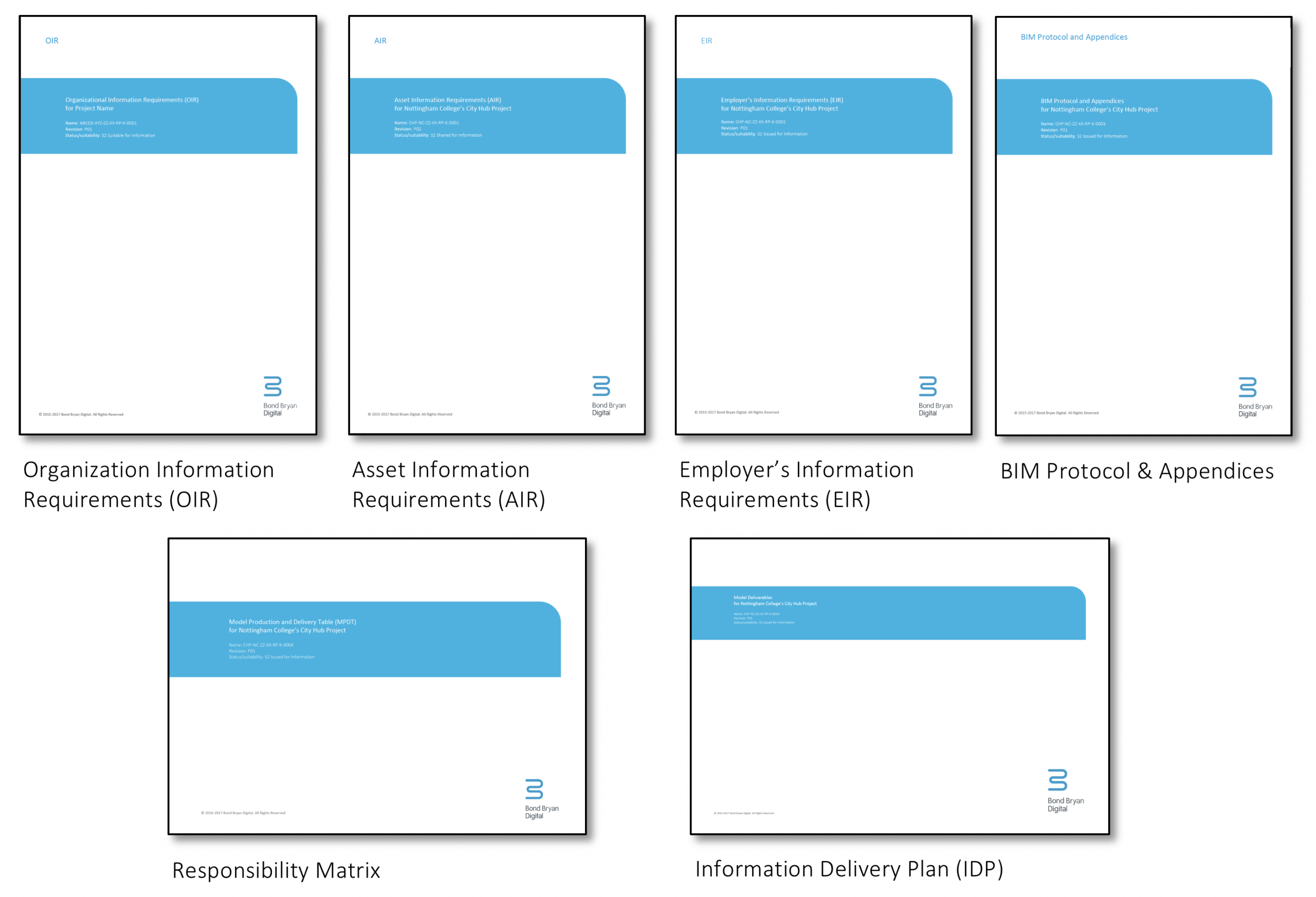

1. Define the information requirements

Before we can build a test file we must have detailed information requirements fully defined. We call this phase ‘Information Definition’. Our approach is to fully align these requirement documents with current industry standards, particularly open standards such as IFC (ISO16739:2013) and COBie (BS1192-4:2014). Documentation that is key to the testing process includes the Asset Information Requirements (AIR) and Employer’s Information Requirements (EIR).

Image: Client documentation defining requirements built around open standards

Clear requirements mean we can understand what is and isn’t required. We want to ensure we only transfer the right information to the right stakeholder at the right time. Anything that isn’t required is waste, and it is likely that it has not been thoroughly checked. Data that is not described in the requirements may therefore be wrong and introduce risk to the project if someone uses it.

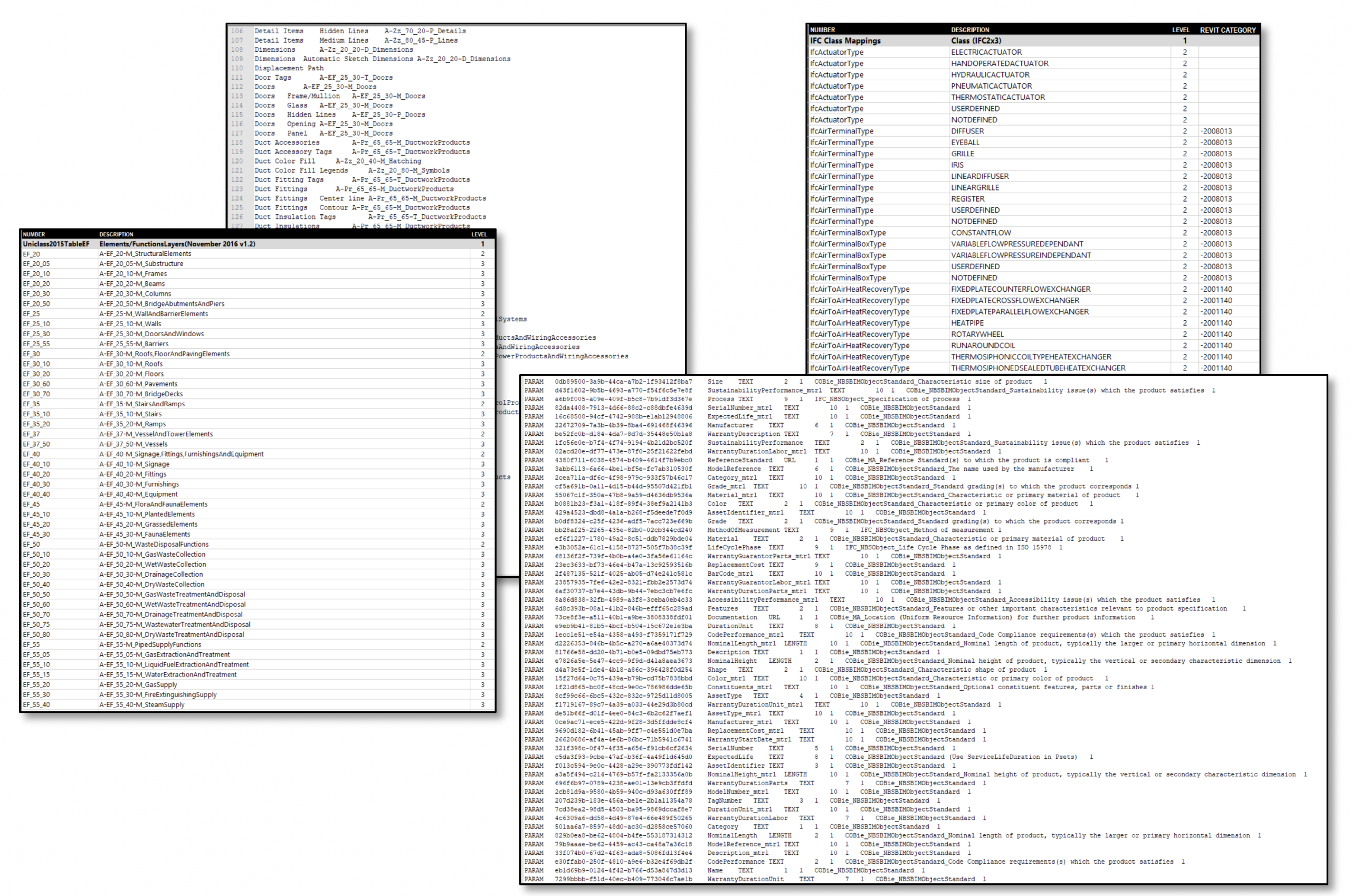

2. Build appropriate resources to support the project delivery

Many authoring tools require certain resources to be in place to support project delivery. These include items such as templates, translators, mapping configurations and classification reference files.

Image: Resources developed for Autodesk Revit for IFC exchange

Even with requirements developed around open standards we also need to ensure consistent inputs from model authors. So where possible we can also provide information to authors on where their data needs to be placed in the native tool. As well as these resources we need to provide clear guidance on how to use them. These guidance documents are key to ensuring authors’ outputs match the test files.

Image: A guide developed for IFC exchange using Autodesk Revit

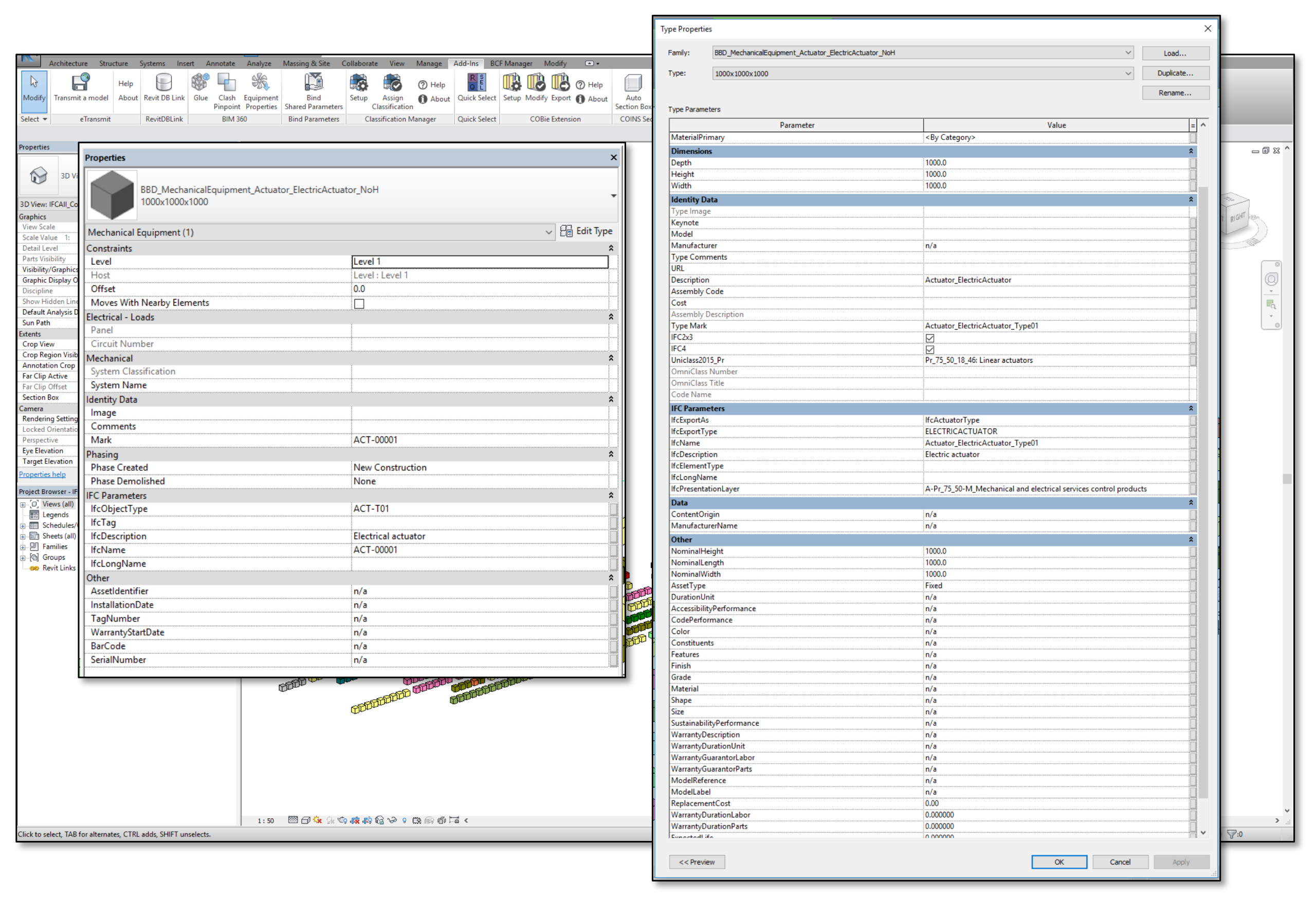

3. Build a native test file that meets the project information requirements

Once we have the resources we need, we can build a file in the appropriate native authoring tool. To date we have done this using Autodesk Revit and GRAPHISOFT ARCHICAD. These files have one representation of each element type and sub type with the appropriate data associated with them. The data we are producing for the IFC includes: Project, Site, Building, Building Storey (i.e. Floors), Space, Zone, Type Product, Product and associated Properties for each required level of the schema.

In the Revit file the geometry is largely represented by simple cubes, whilst in the ARCHICAD file the geometry is made up of 3-dimensional text objects. Each piece of geometry represents a different type of building element and carries the required data identified in the employer’s information requirements.

Image: Autodesk Revit native file built to provide a compliant output

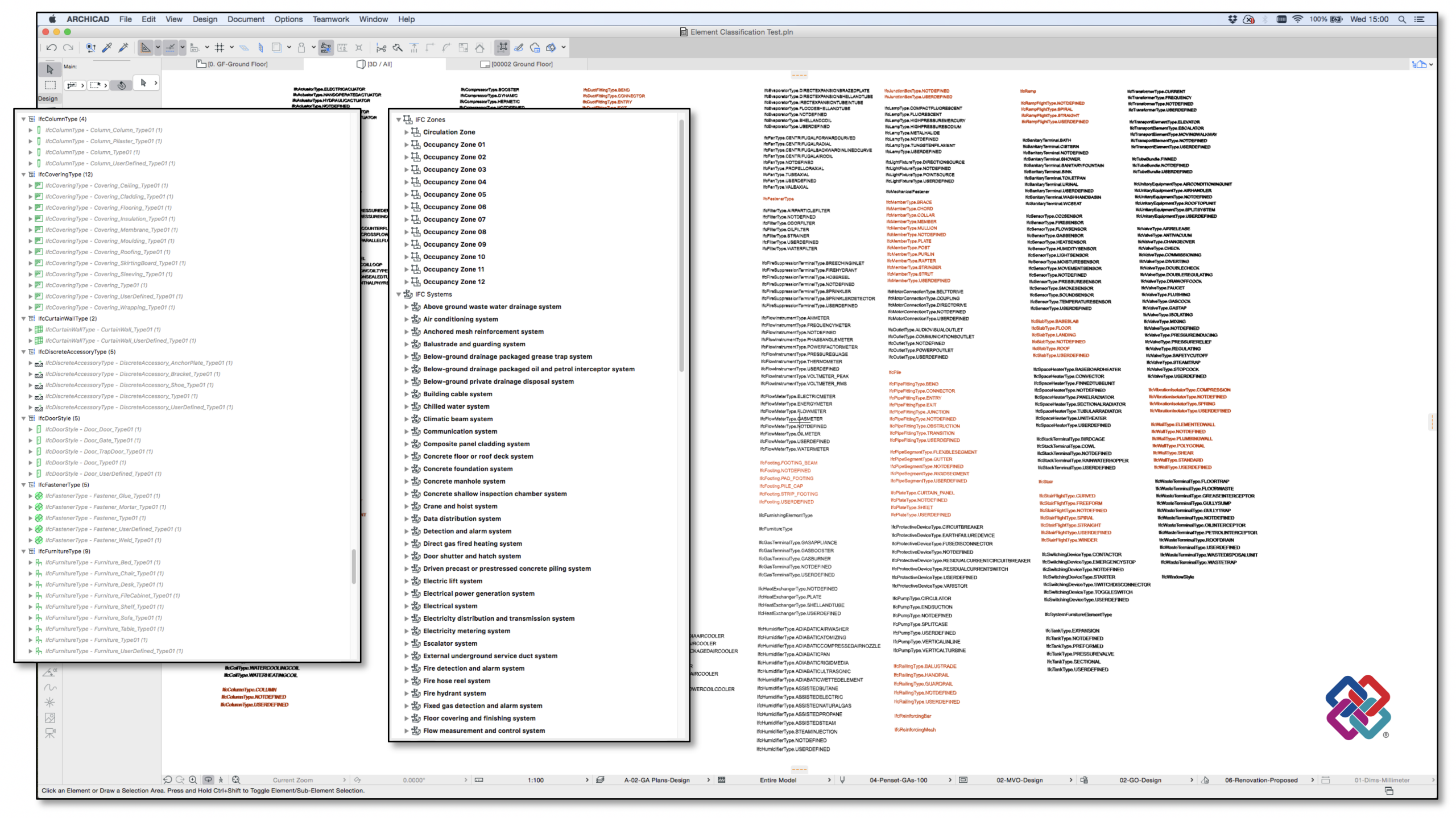

Image: GRAPHISOFT ARCHICAD native file built to provide a compliant output

4. Export an IFC from the authoring tool(s)

Once the native file is complete we can then export an IFC. Currently the files we are exporting follow the IFC2x3 schema as most tools support this version. However, our whole approach is future-proofed so that we can also deliver to the IFC4 schema in the near future.

Image: IFC (Industry Foundation Classes) is just one of the open standards created and maintained by buildingSMART International. Bond Bryan also utilise COBie and BCF (BIM Collaboration Format) in our workflows.

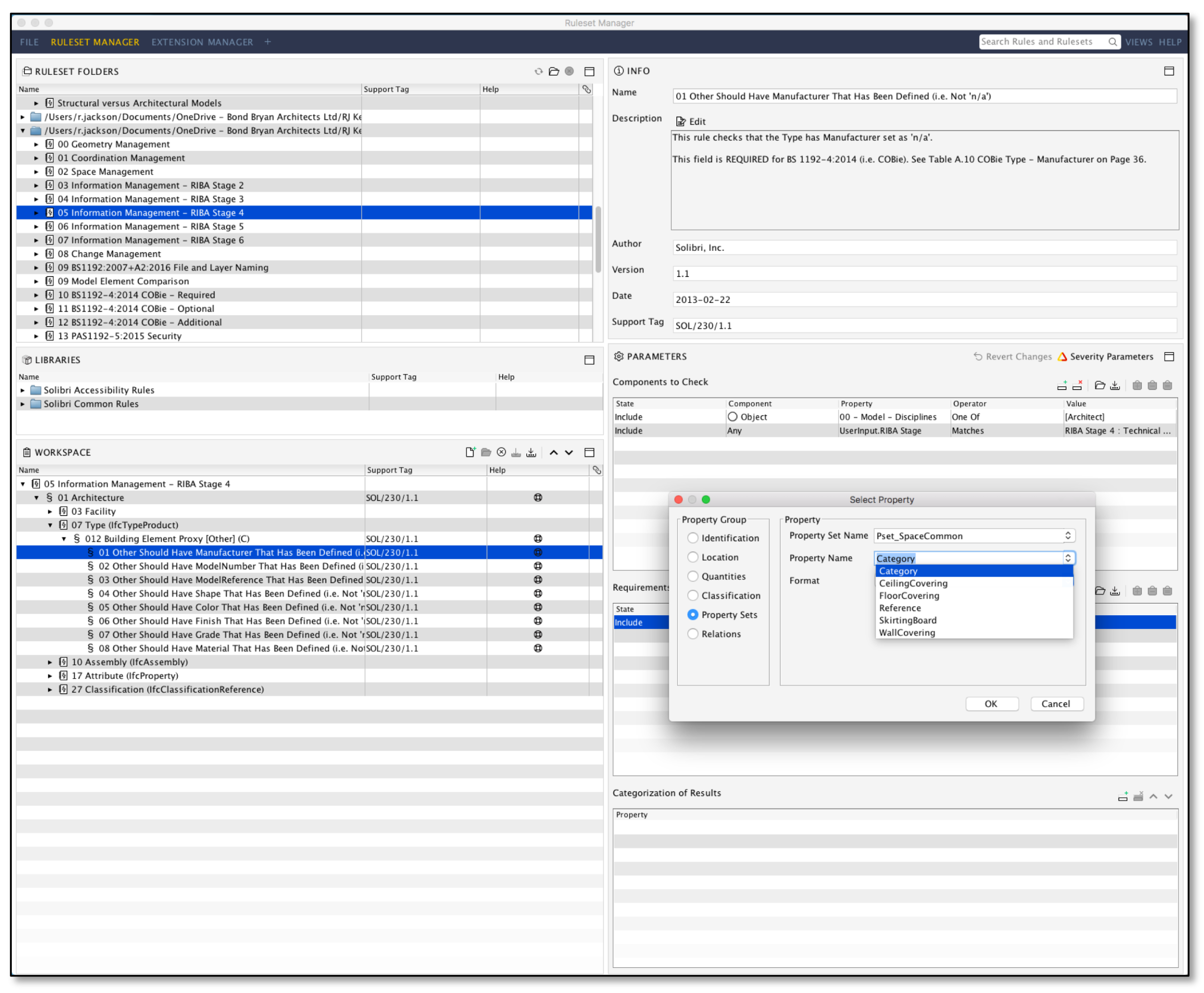
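
As an aside on step 4, an IFC file is a plain-text STEP file whose header names the schema it was exported against, so a quick sanity check on an export is possible without opening it in any BIM tool. The following is only a sketch: the function name `read_ifc_schema` and the sample header are our own illustration, not the output of any particular authoring tool.

```python
import re

# Matches the first schema name inside FILE_SCHEMA(('...'));
_SCHEMA = re.compile(r"FILE_SCHEMA\s*\(\s*\(\s*'([^']+)'", re.IGNORECASE)


def read_ifc_schema(header_text):
    """Return the schema identifier named in a STEP header, or None if absent."""
    match = _SCHEMA.search(header_text)
    return match.group(1).upper() if match else None


# Illustrative header only; real exports carry tool-specific descriptions and names.
sample_header = """ISO-10303-21;
HEADER;
FILE_DESCRIPTION(('ViewDefinition [CoordinationView]'),'2;1');
FILE_NAME('test.ifc','2017-01-01T00:00:00',(''),(''),'','','');
FILE_SCHEMA(('IFC2X3'));
ENDSEC;
"""

print(read_ifc_schema(sample_header))  # prints IFC2X3
```

For a real file it should be enough to read the first few kilobytes as text and pass them to the function, since the header sits at the top of the file.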

5. Build model checking rules aligned with the information requirements

With both the defined requirements for the project and robust test files we can build model checking rules to ensure that the actual project files meet the same requirements. In this particular blog piece we won’t go into the detail of model checking, but the important thing to understand is that having robust checking procedures means the data will be more reliable for others to use.

Image: Building rules in Solibri Model Checker

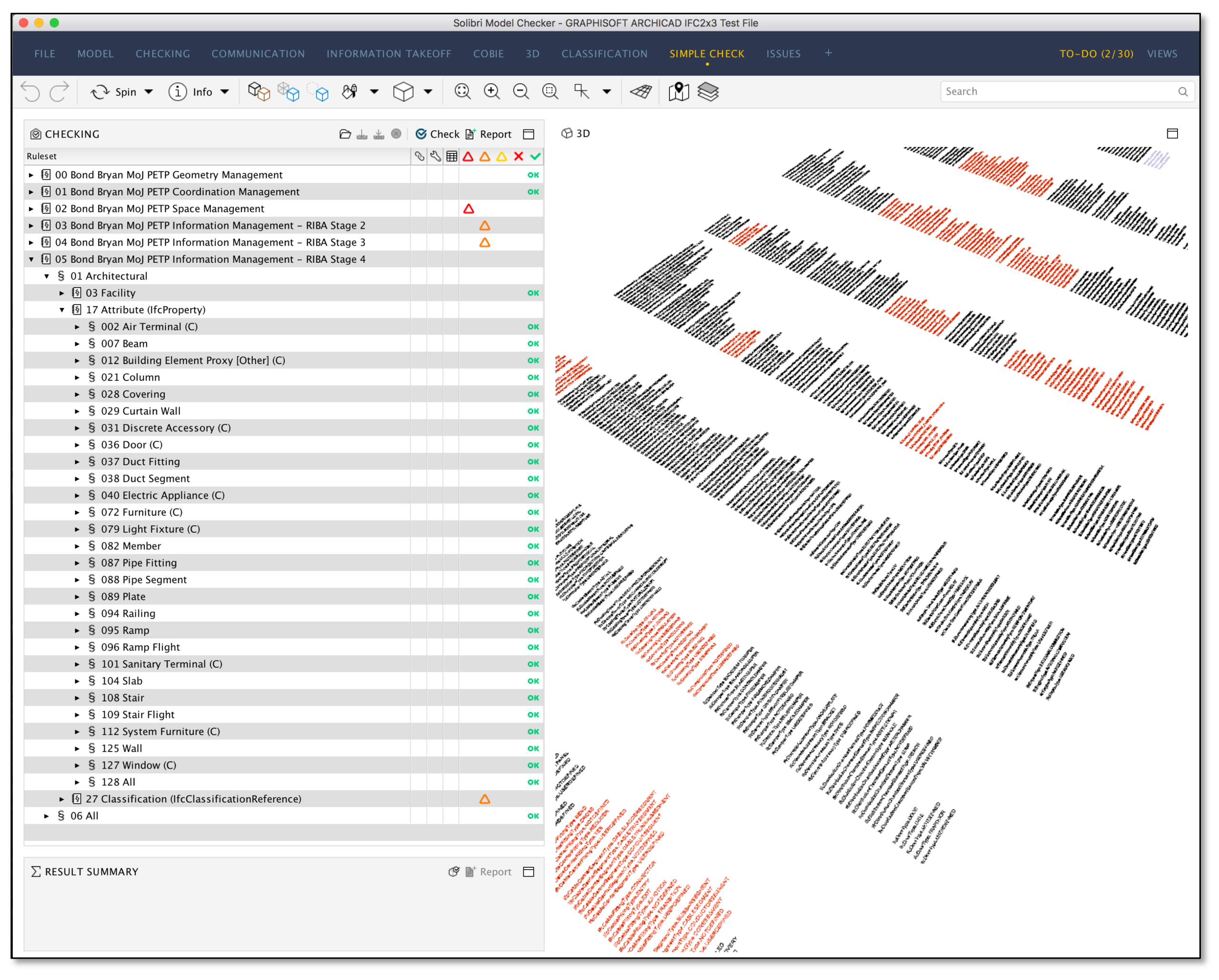

6. Test the IFC(s) for compliance

Once the rules have been built we can test the IFCs we have created. This allows us to understand if there are any issues in the IFC creation, the export or even the model checking rules themselves.

Image: Model checking of the IFC in Solibri Model Checker

Using a test dataset allows us to understand these issues much faster than if we were using actual project files. We can correct the native files and re-export the IFCs, or adjust the rules to suit.

In some cases, software is unable to deliver files fully compliant with IFC and COBie. In these instances we report the issues back to vendors for improvement of their products. This has resulted in a number of improvements in various solutions over the last few years. Where software cannot deliver full compliance with the required standards we may need to accept that deviation is inevitable. For example, Autodesk Revit can only deliver IfcSystem data for a limited number of element types, so workarounds are necessary for both IFC and COBie. In these instances we agree and document the variances accordingly.

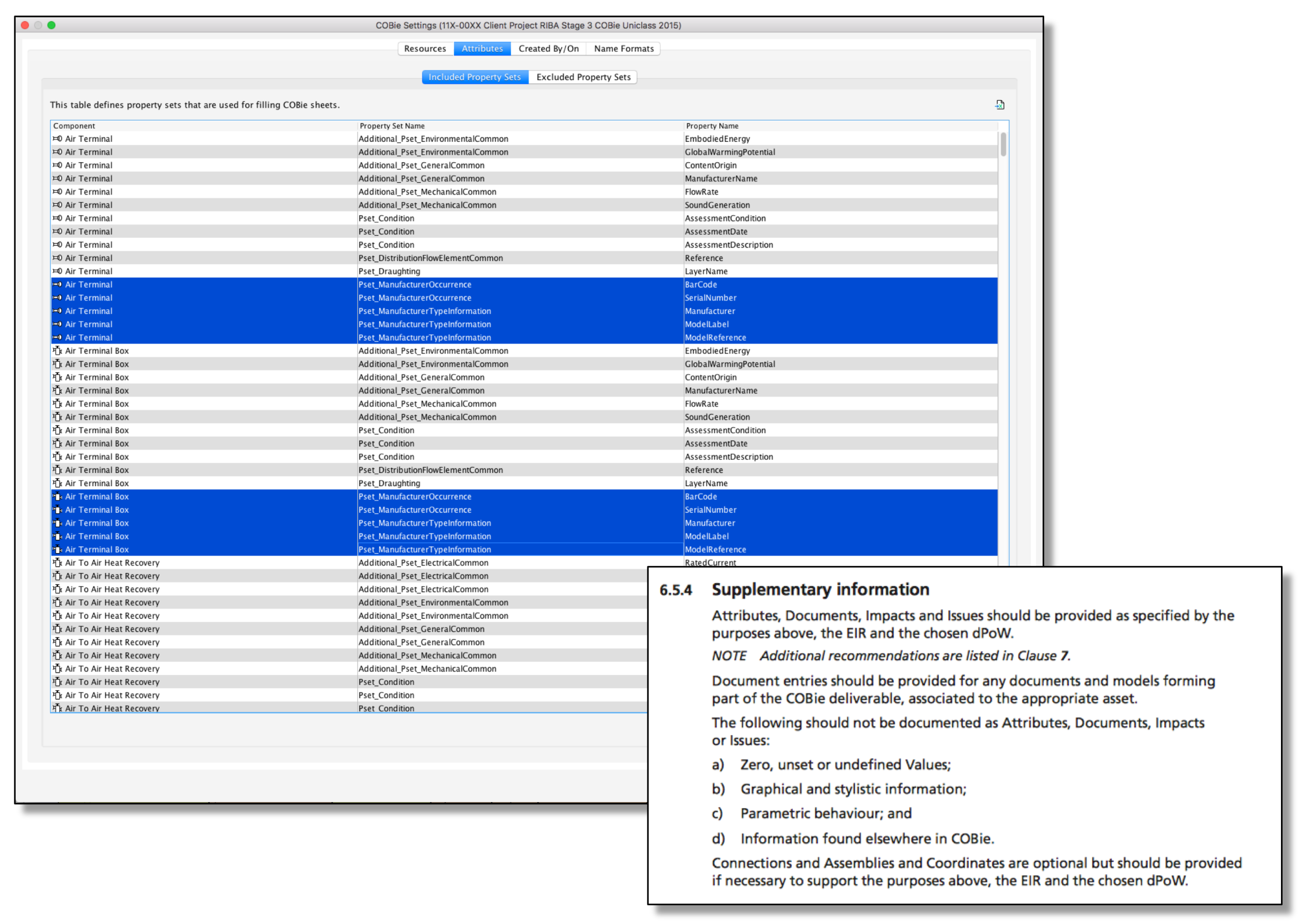
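
The idea in step 6 of agreeing and documenting variances can be sketched as a simple triage of checker results. This is an illustration only: the `Issue` class, the rule identifiers and the element names are hypothetical, and a real variance register would live in the project documentation rather than in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    element: str  # element name or identifier taken from a checker report
    rule: str     # hypothetical rule identifier


def triage(issues, agreed_variances):
    """Split issues into (open, accepted) using a register of agreed variances."""
    open_issues, accepted = [], []
    for issue in issues:
        target = accepted if issue.rule in agreed_variances else open_issues
        target.append(issue)
    return open_issues, accepted


# Rule identifier -> documented reason the deviation has been accepted.
agreed_variances = {
    "system-assignment": "Authoring tool cannot export IfcSystem for this element type",
}

report = [
    Issue("Element A", "missing-classification"),
    Issue("Element B", "system-assignment"),
]

open_issues, accepted = triage(report, agreed_variances)
for issue in open_issues:
    print("fix:", issue.element, issue.rule)
for issue in accepted:
    print("accepted:", issue.element, "-", agreed_variances[issue.rule])
```

Keeping the reason alongside each accepted rule means the deviation stays visible in every report instead of being silently ignored.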

7. Configure COBie creation from IFC(s)

Once we have a compliant IFC we can then generate a COBie output from it. Tools vary in how they do this, but with Solibri Model Checker we are able to configure the COBie output as required for the project. This might include adjusting the element types to be included or excluded, or changing where the data is coming from (e.g. System data from Autodesk Revit). We can also make sure that we only include the properties that are required for the COBie Attribute data. This gives an optimised output containing only required data that has been validated and verified.

Image: COBie Attribute configuration in Solibri Model Checker and the requirements specified by BS1192-4 (COBie) regarding Attributes

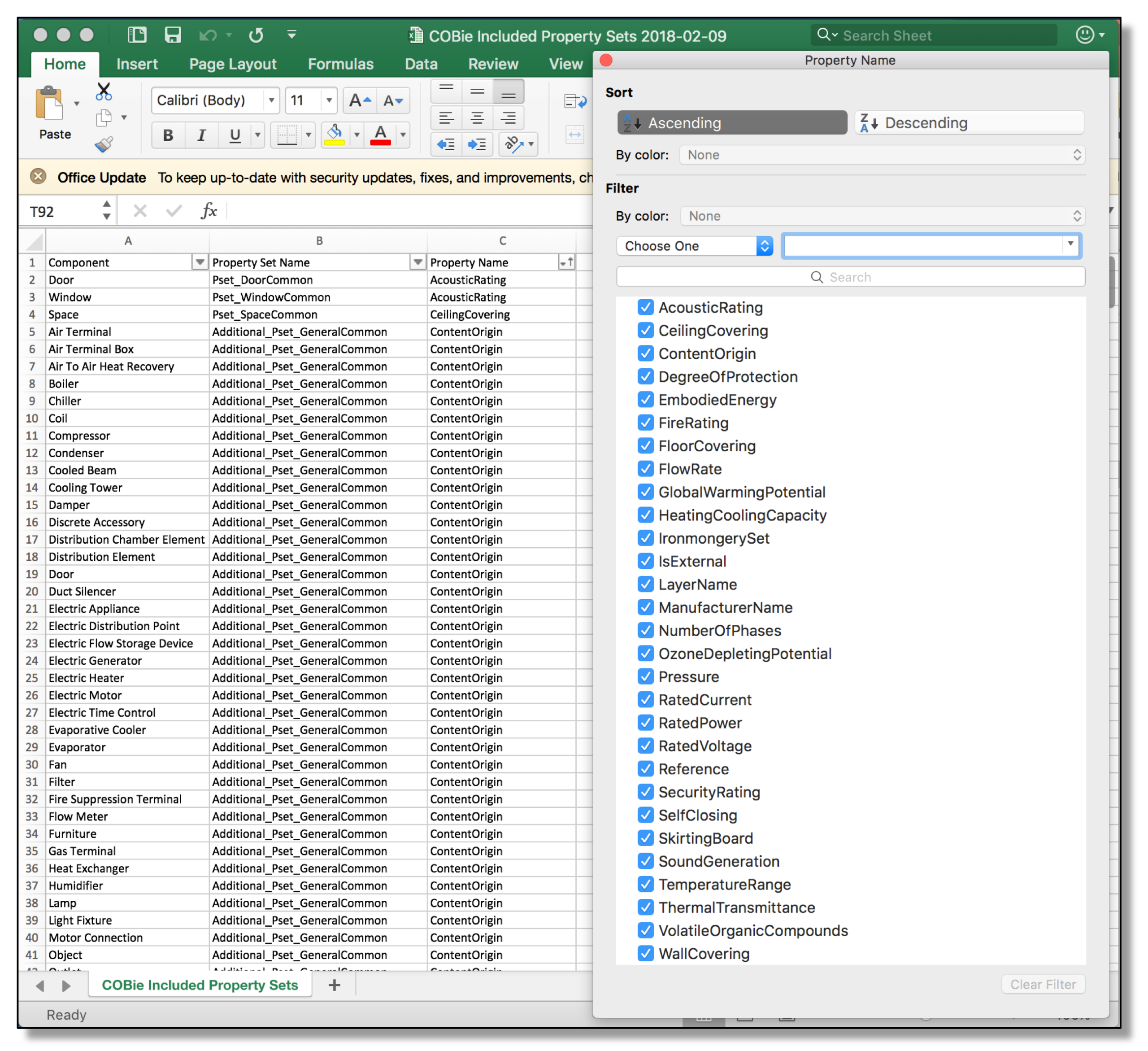

Image: COBie Attributes optimised when exported to Microsoft Excel – this means only the required properties are included

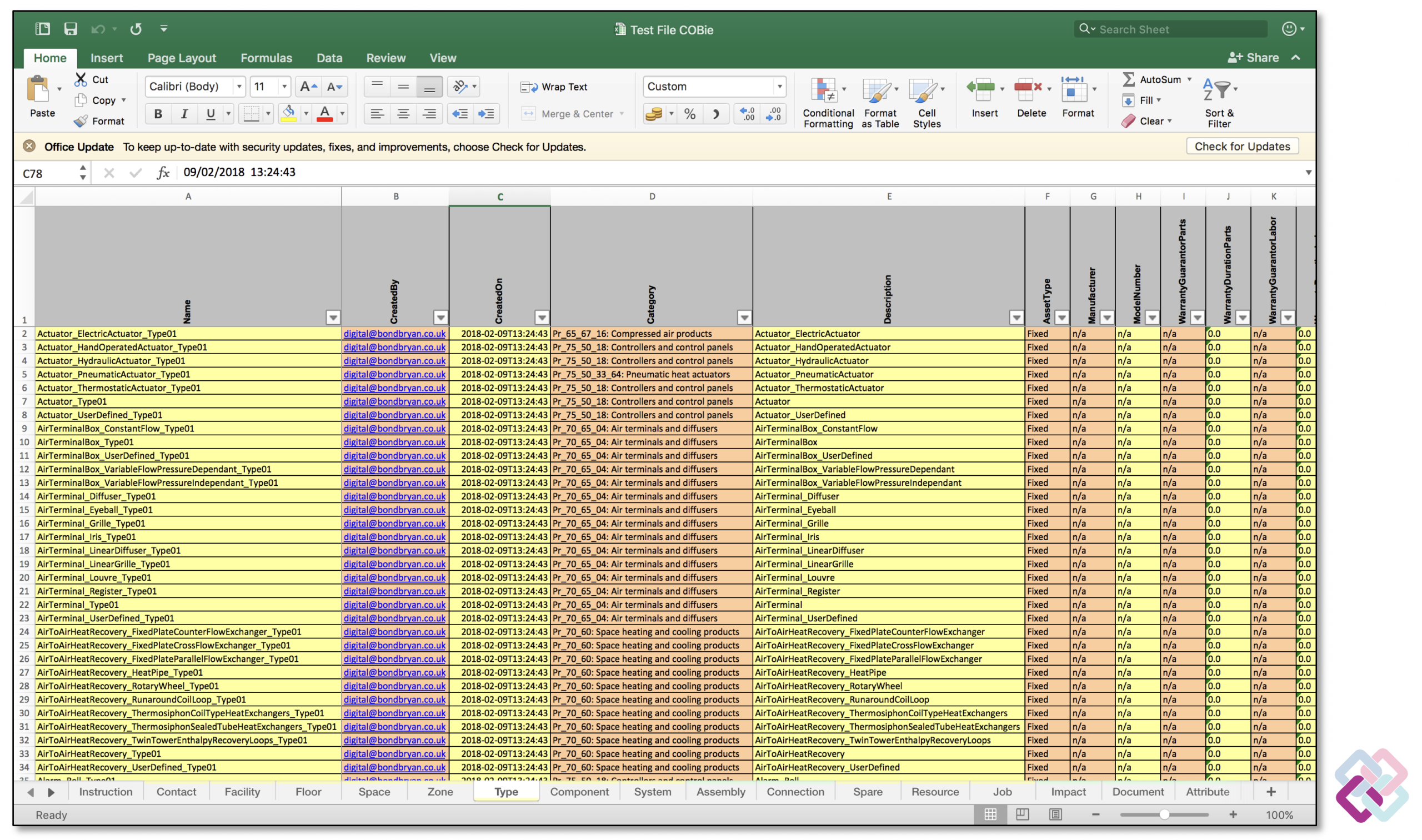
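
The Attribute optimisation described in step 7 amounts to keeping only the properties named in the requirements. In our workflow this is done through configuration in Solibri Model Checker, not code, so the following is purely a sketch of the principle. The function name and the sample rows are hypothetical; `Name`, `SheetName`, `RowName` and `Value` are columns of the COBie Attribute sheet.

```python
def filter_attributes(rows, required_names):
    """Return (kept_rows, dropped_names) for a list of COBie Attribute rows."""
    kept = [row for row in rows if row["Name"] in required_names]
    dropped = sorted({row["Name"] for row in rows} - set(required_names))
    return kept, dropped


required = {"FireRating", "AcousticRating"}

# Illustrative rows; "Phase Created" stands in for data nobody asked for.
attribute_rows = [
    {"Name": "FireRating", "SheetName": "Type", "RowName": "Door type A", "Value": "FD30"},
    {"Name": "Phase Created", "SheetName": "Type", "RowName": "Door type A", "Value": "New"},
    {"Name": "AcousticRating", "SheetName": "Type", "RowName": "Door type A", "Value": "30dB"},
]

kept, dropped = filter_attributes(attribute_rows, required)
print(len(kept), "rows kept; dropped:", dropped)
```

Listing what was dropped is useful during configuration, because it shows exactly which unrequired data the native file is carrying.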

8. Create COBie from compliant IFC(s)

Once we have completed the configuration process we can create a COBie output. The output here includes the following information directly from the IFC: Facility, Floor, Space, Zone, Type, Component, System, Attribute and Coordinate. We currently use Contact data that has been manually created in Microsoft Excel, because there are currently some limitations with both Autodesk Revit and Solibri Model Checker. This data can however easily be combined at the point of COBie creation from Solibri Model Checker. We could also include Assembly data if required, but we have yet to have a client identify a need for it.

Image: COBie (Construction-Operations Building information exchange) created from the IFC (Industry Foundation Classes)

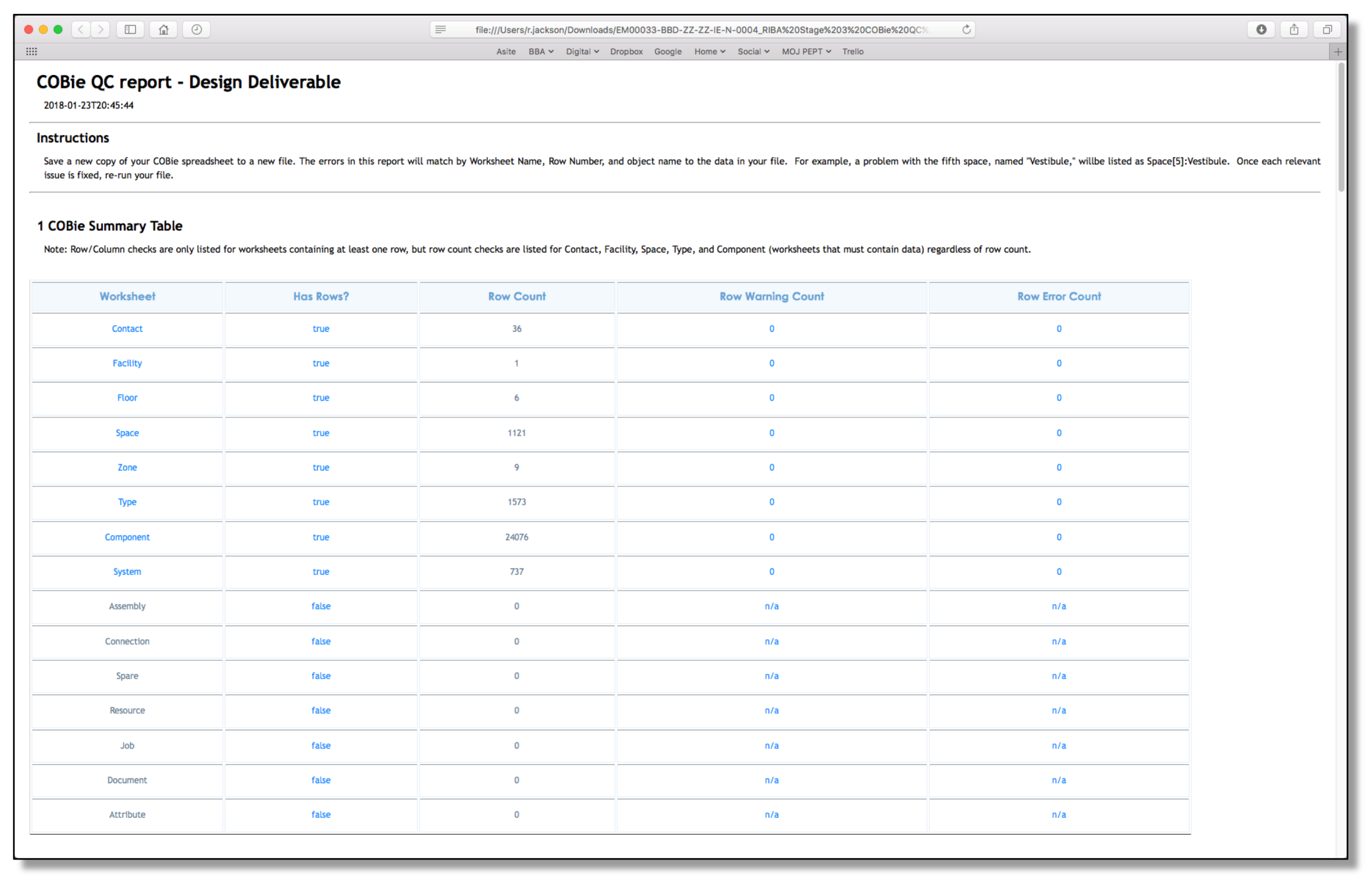
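
Combining the manually created Contact data with the sheets generated from the IFC, as described in step 8, can be pictured as a simple merge followed by a completeness check. This is a hedged sketch, not what Solibri Model Checker does internally: `combine_sheets` is a hypothetical helper and each sheet is represented as a plain list of row dictionaries.

```python
EXPECTED_SHEETS = (
    "Contact", "Facility", "Floor", "Space", "Zone",
    "Type", "Component", "System", "Attribute", "Coordinate",
)


def combine_sheets(from_ifc, manual):
    """Merge manually authored sheets into the IFC-derived ones and list any gaps."""
    overlap = sorted(set(from_ifc) & set(manual))
    if overlap:
        # A sheet should have exactly one source so nothing is silently overwritten.
        raise ValueError(f"sheets supplied twice: {overlap}")
    workbook = {**from_ifc, **manual}
    missing = [name for name in EXPECTED_SHEETS if name not in workbook]
    return workbook, missing


# Empty placeholder sheets stand in for the data generated from the IFC.
from_ifc = {name: [] for name in EXPECTED_SHEETS if name != "Contact"}
manual = {"Contact": [{"Email": "name@example.com"}]}

workbook, missing = combine_sheets(from_ifc, manual)
print("missing sheets:", missing)  # prints missing sheets: []
```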

9. Test COBie for compliance using the COBie QC Reporter

Solibri Model Checker (and other tools on the market) allows us to create COBie as an XLSX file. In order to demonstrate compliance with the COBie schema we use the COBie QC Reporter to check the XLSX file. This tool is freely available here: https://github.com/OhmSweetOhm/CobieQcReporter/releases

A book explaining this process, written by Bill East (the creator of COBie) and Alfred Bogen, is available here: http://www.lulu.com/gb/en/shop/e-william-east-and-alfred-c-bogen/construction-operation-building-information-exchange-cobie-quality-control/paperback/product-22947013.html

The QC Reporter currently checks some data that Solibri cannot check, e.g. unique Type Names. So we use Solibri Model Checker and the QC Reporter in tandem to provide the highest quality output. Even with full compliance in Solibri we would still continue to use the QC Reporter to provide formal evidence of compliance with the COBie schema.

Image: COBie QC report: the aim is to have a zero error count in the right hand column

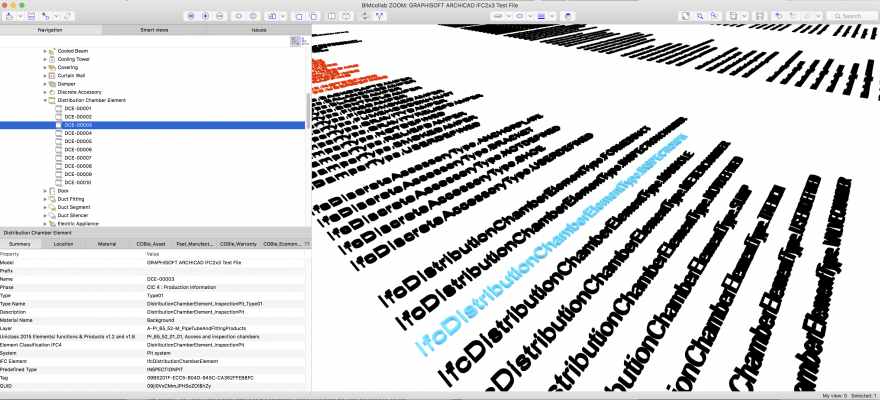

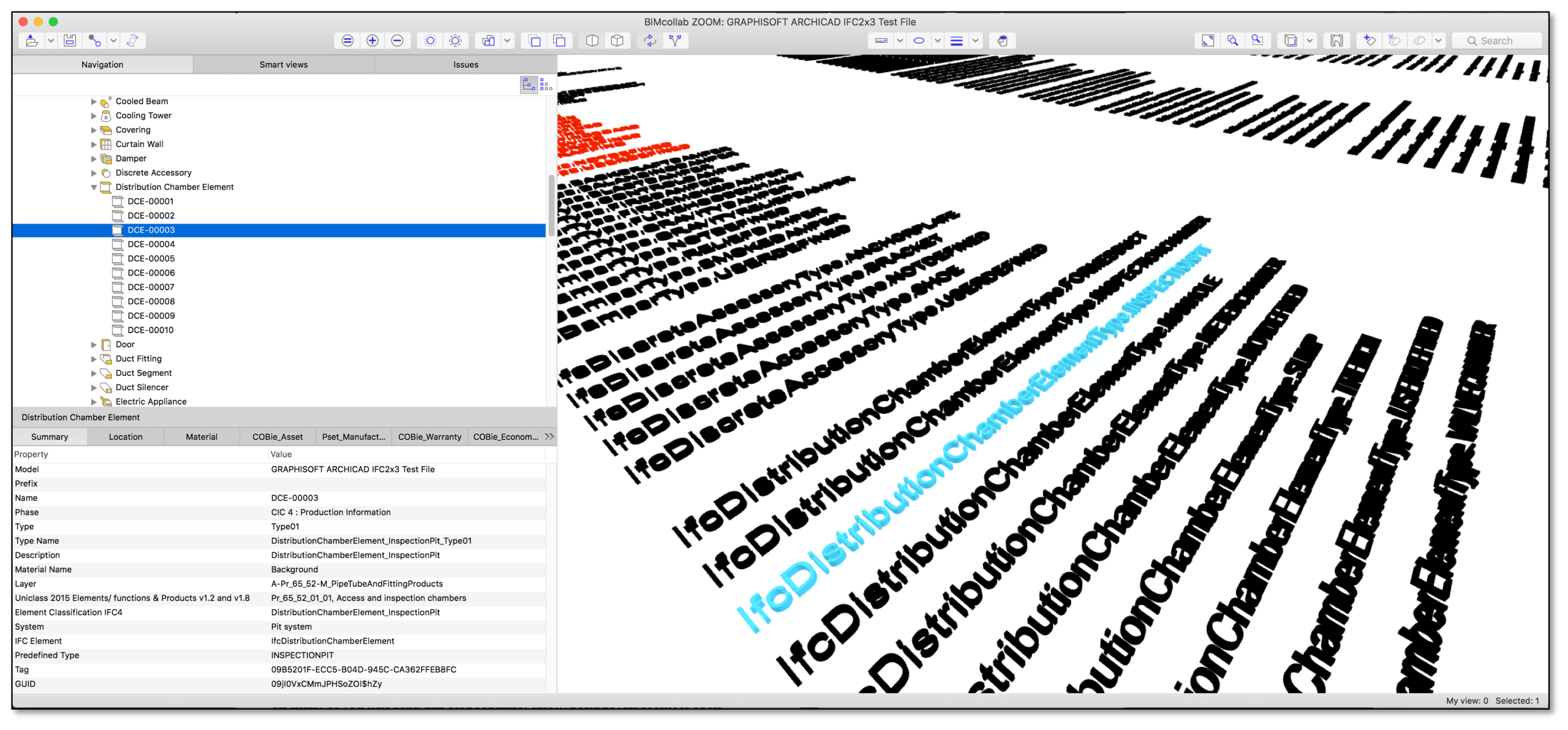
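
To illustrate the kind of check mentioned in step 9, here is a minimal sketch of a unique Type Name test. It is not the QC Reporter’s implementation, which we have not reproduced here. The helper name is hypothetical, rows are assumed to have already been read from the Type sheet into dictionaries, and treating names that differ only by surrounding spaces as duplicates is our own assumption.

```python
from collections import Counter


def duplicate_names(rows, column="Name"):
    """Return the sorted values of `column` that appear on more than one row."""
    counts = Counter(row[column].strip() for row in rows)
    return sorted(name for name, count in counts.items() if count > 1)


# Illustrative Type rows; the third repeats the first with a trailing space.
type_rows = [
    {"Name": "Door type A"},
    {"Name": "Door type B"},
    {"Name": "Door type A "},
]

print(duplicate_names(type_rows))  # prints ['Door type A']
```

An empty list corresponds to a zero error count for this particular check.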

10. Use the IFC and/or COBie to test required software solutions

Now that the IFC and COBie have been fully tested they can be used by others to test and configure their software solutions as required. They can make sure that any configurations that are required are done well in advance of receiving the actual project files. As mentioned earlier, it also allows any missing requirements to be identified earlier in a project.

The process also allows any deficiencies in the receiving software to be identified. Again this may mean adjusting the information requirements to suit. Equally this process may identify that a software solution simply isn’t suitable to use the datasets. This might for example mean that COBie won’t exchange directly into the proposed CAFM solution. With the test files these issues will be known well before handover, allowing the issues to be fixed in the CAFM solution, a workaround to be developed or, more radically, a Facilities Management team to change solutions completely.

IFCs can be opened in viewing software such as KUBUS’s BIMcollab ZOOM.

Image: IFC opened in BIMcollab ZOOM

With a correct approach to IFC, geometry and data can be transferred from one authoring tool to another. Below is an example of the IFC created using Autodesk Revit and then opened in GRAPHISOFT ARCHICAD. All the geometry and data are transferred and appear in ARCHICAD’s IFC Project Manager exactly as if the same data had been created natively. Both the geometry and data can then be edited if required and a new IFC created if needed.

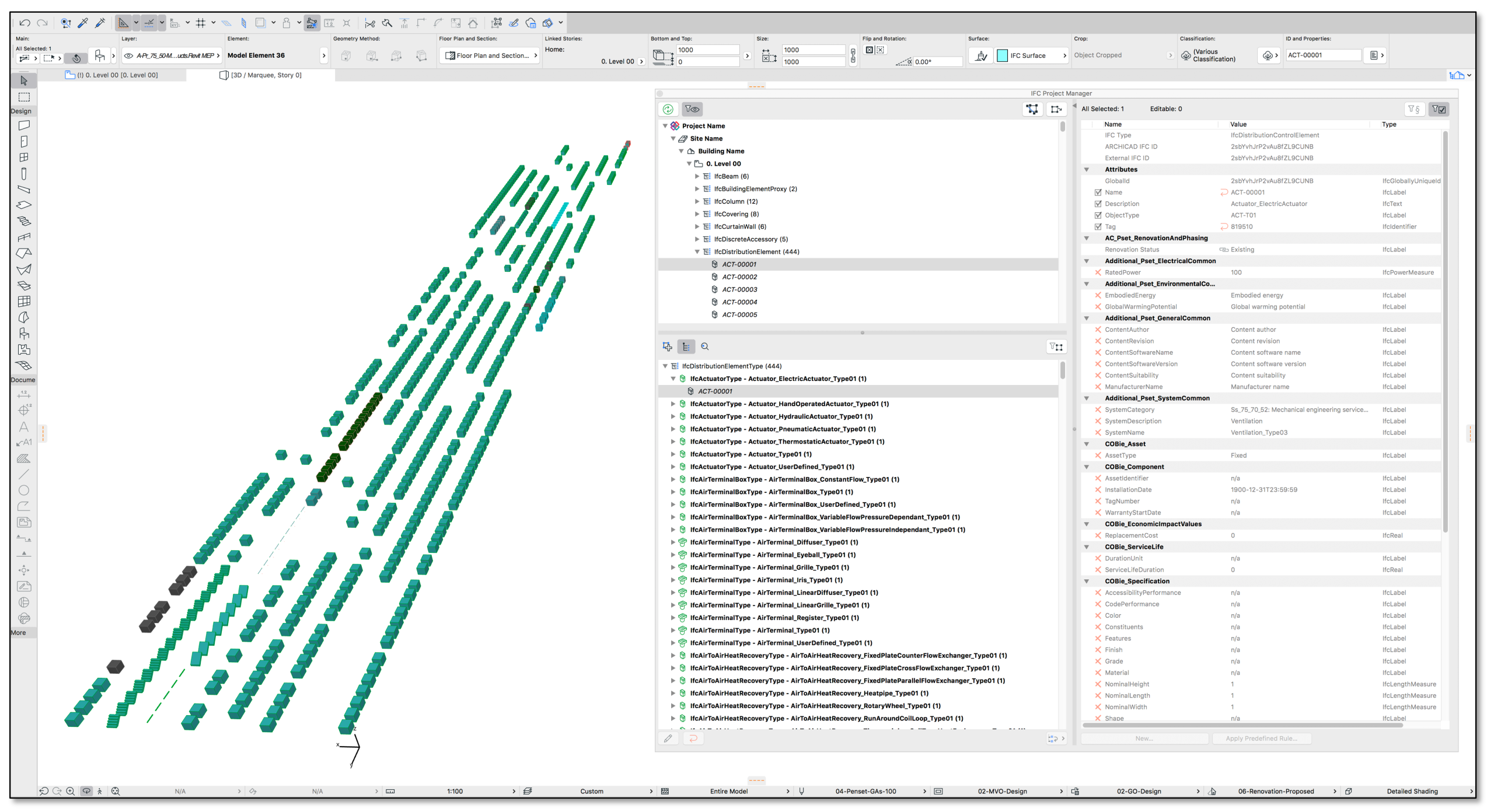

Image: IFC created with Autodesk Revit opened in GRAPHISOFT ARCHICAD

Bond Bryan have used this workflow to take surveyors’ models provided as IFC (originally created in Autodesk Revit) and develop them further for design. This workflow is called ‘Design Transfer’ in IFC4 and will improve as vendors implement IFC4 and users request more transfer functionality from buildingSMART. The point though is that this already happens today, and is only really efficient and effective where consistent open standards are utilised by all stakeholders. This openBIM workflow is key to ensuring we can all use the tools we want to and that the geometry and data can be reused both in the short and long term.

Final thoughts

Building test files isn’t essential to the success of a project utilising BIM processes, but it does provide a more robust process to ensure reliable data exchange and to ensure that the data meets all stakeholders’ needs well before it may become an issue later in a project. All too often we hear far too late in a project stage that data is not being used. This doesn’t allow us to correct the processes, and often means the best of intentions to make the most of BIM are abandoned for slower traditional processes.

Implementing a testing regime before project discipline models are produced means that having robust (quality controlled), reliable (no loss of required data) and reusable (built to open standards) data becomes far more of a certainty for projects. This ultimately means all stakeholders have more trust and belief in the data they are receiving. With this trust they are more likely to increase their use of the data to improve their own data outputs. Better outputs can, of course, ultimately lead to better outcomes for our built assets.

Of course the test files are just another part of developing robust processes that are repeatable from one project to the next. The next challenge is to get authors to deliver the same high quality outputs as the test files, but this is far easier when you know it’s actually achievable before you start!

Rob Jackson, Director, Bond Bryan Digital Ltd
